AI Judges: Why Zero-Trust Evaluation Is the Enterprise Standard Your AI Platform Is Missing

What it actually means to be enterprise-grade in the age of agentic AI, and why most platforms have not caught up.

There is a phrase that gets used so frequently in enterprise software that it has nearly lost all meaning: "enterprise-grade." Every vendor claims it. Few define it rigorously. And in the context of agentic AI, where autonomous systems are making decisions, generating content, and taking actions on behalf of marketing organizations, the gap between the claim and the reality has become difficult to ignore.

At Kana, we think about enterprise readiness through a specific lens. If an AI system cannot explain, trace, and defend every output it produces, it is not ready to operate inside a marketing organization that answers to a board of directors.

That conviction is why we built AI Judges.

The Hallucination Problem Is a Trust Problem

LLM hallucinations are not edge cases. They are the default behavior of probabilistic language models operating without constraints. Every foundation model, regardless of parameter count or benchmark performance, will under certain conditions generate outputs that sound plausible and are completely wrong.

The typical industry response has been to layer prompting strategies, retrieval-augmented generation, and post-hoc fact-checking on top of existing architectures. These are useful techniques, but they are insufficient for enterprise deployment because they treat evaluation as an afterthought rather than a structural requirement.

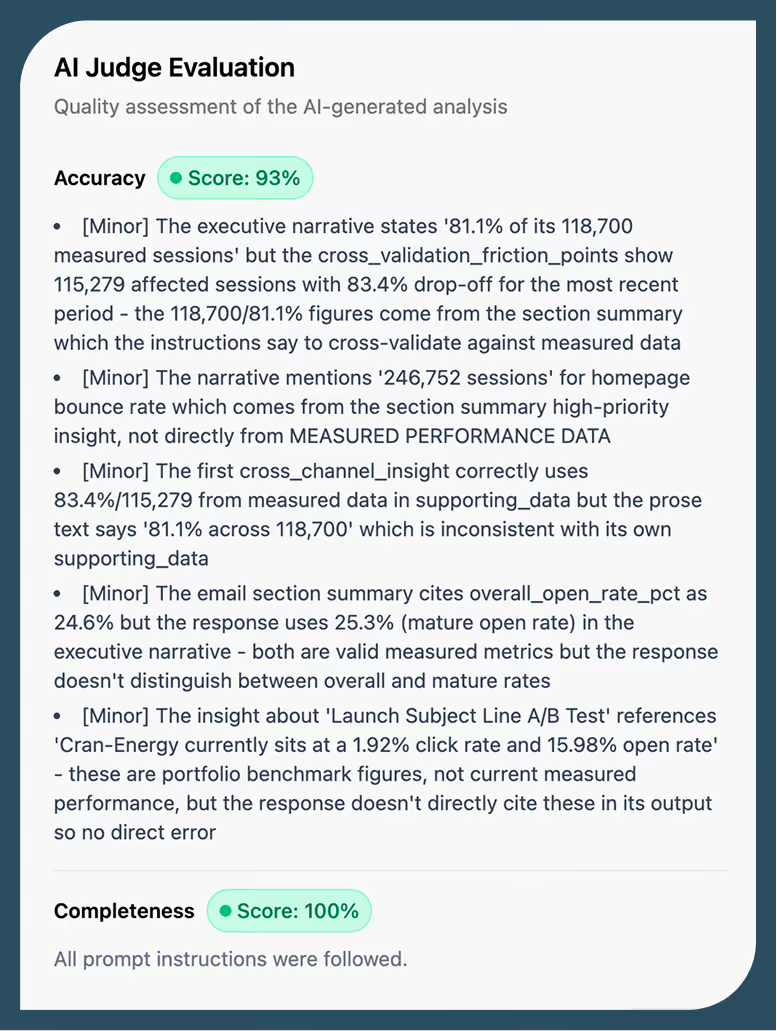

AI Judges take a different approach. Borrowing from the security concept of zero trust, we start from the assumption that no LLM output should be trusted until it has been independently evaluated. Every response generated within the Kana platform passes through a judging layer that scores accuracy, validates completeness, and traces every data input that contributed to the result.

What Zero-Trust Evaluation Looks Like in Practice

When an agent in the Kana platform generates an output, whether that is a campaign recommendation, an audience segment, or a creative brief, AI Judges intercept that output before it reaches the end user. The judging process evaluates three dimensions.

- First, accuracy: does the output align with the source data and context that were provided?

- Second, completeness: does the response address the full scope of the query, or has the model taken shortcuts?

- Third, traceability: can we reconstruct exactly which data, prompts, and reasoning steps produced this particular result?
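
To make the three dimensions concrete, here is a minimal sketch of a judging gate in Python. It is an illustration under loud assumptions, not Kana's implementation: the names `Verdict`, `judge`, and `release`, the 0.8 threshold, and the exact-match scoring are all hypothetical, and a real judge would use far richer checks than string comparison.

```python
from dataclasses import dataclass, field


@dataclass
class Verdict:
    accuracy: float       # share of claims supported by the source data
    completeness: float   # share of required topics the output addresses
    trace: dict = field(default_factory=dict)  # what is needed to reconstruct the result

    def passed(self, threshold: float = 0.8) -> bool:
        # Hypothetical rule: both scores must clear the same threshold.
        return self.accuracy >= threshold and self.completeness >= threshold


def judge(claims, source_facts, required_topics, prompt_id):
    """Toy judge: scores an agent output that has already been split into claims."""
    supported = [c for c in claims if c in source_facts]
    accuracy = len(supported) / len(claims) if claims else 0.0

    text = " ".join(claims).lower()
    covered = [t for t in required_topics if t.lower() in text]
    completeness = len(covered) / len(required_topics) if required_topics else 1.0

    trace = {
        "prompt_id": prompt_id,
        "sources_used": supported,
        "unsupported_claims": [c for c in claims if c not in source_facts],
    }
    return Verdict(accuracy, completeness, trace)


def release(claims, source_facts, required_topics, prompt_id):
    """Zero-trust gate: the output is withheld unless the verdict passes."""
    verdict = judge(claims, source_facts, required_topics, prompt_id)
    return (claims if verdict.passed() else None), verdict
```

The design point is that `release` returns the verdict even when it withholds the output, so a reviewer can see which claims had no supporting source rather than only learning that something was blocked.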

Every evaluation is logged in a searchable audit trail. Every agent action and reasoning event is recorded. This is not a debugging tool. It is a governance layer. When your CMO asks why a recommendation was made, or your compliance team needs to understand how an insight was generated, the answer is immediate and complete.
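
As a rough illustration of what a searchable audit trail can look like, consider the sketch below. It assumes an in-memory, append-only log; the `AuditTrail` class and its field names (`agent`, `type`, `detail`) are hypothetical and chosen for this example only. A production system would write to durable, tamper-evident storage.

```python
import json
from datetime import datetime, timezone


class AuditTrail:
    """Append-only event log with exact-match search (illustrative only)."""

    def __init__(self):
        self._events = []  # serialized entries; never edited after being written

    def record(self, agent, event_type, detail):
        entry = {
            "ts": datetime.now(timezone.utc).isoformat(),
            "agent": agent,
            "type": event_type,   # e.g. "action", "reasoning", "evaluation"
            "detail": detail,
        }
        self._events.append(json.dumps(entry, sort_keys=True))
        return entry

    def search(self, **filters):
        """Return every entry whose top-level fields equal the given filters."""
        results = []
        for raw in self._events:
            entry = json.loads(raw)
            if all(entry.get(key) == value for key, value in filters.items()):
                results.append(entry)
        return results
```

With a log like this, a question such as "why was this recommendation made?" becomes a query, for example `trail.search(agent="campaign-agent", type="reasoning")`, rather than a forensic exercise.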

The Human in the Loop Is Not Optional

There is a temptation in the agentic AI space to frame full autonomy as the end goal: systems that run without human oversight, making decisions at machine speed. We believe that framing is premature, particularly for enterprise marketing.

Kana’s philosophy is augmented intelligence: AI that amplifies human judgment rather than replacing it. AI Judges are the technical embodiment of that philosophy. By ensuring every output is evaluated, scored, and traceable, we give marketing teams the information they need to review, coach, and direct their AI systems in real time.

This is not about slowing things down. It is about building the trust infrastructure that allows you to move faster with confidence. A marketing leader who can see exactly how an insight was generated will greenlight execution in minutes. A marketing leader looking at an unexplainable output from a black-box system will, correctly, hesitate.

Enterprise-Grade Means Accountable by Default

Any evaluation of whether an agentic AI platform is truly enterprise-ready should start with three questions: Is every output explainable? Is every decision traceable? Can the system prove its own work?

AI Judges are not a premium add-on in the Kana platform. They are built into every application by default. We made that architectural decision deliberately, because governance should not be something you remember to turn on. It should be something you would have to work to turn off.
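
One way to picture "on by default, work to turn off" is a configuration object where judging is enabled unless someone deliberately overrides it and leaves a record of why. The sketch below is hypothetical: `AppConfig`, `disable_judges`, and the reason-plus-approver requirement are assumptions made for illustration, not a description of the Kana platform's settings.

```python
from dataclasses import dataclass, replace


@dataclass(frozen=True)
class AppConfig:
    name: str
    judges_enabled: bool = True   # governance is on unless someone acts
    override_reason: str = ""
    override_approver: str = ""


def disable_judges(config, reason, approver):
    """Turning judges off takes deliberate effort and leaves a record."""
    if not reason.strip() or not approver.strip():
        raise ValueError("disabling judges requires a reason and an approver")
    return replace(
        config,
        judges_enabled=False,
        override_reason=reason,
        override_approver=approver,
    )
```

Because the dataclass is frozen, the flag cannot be flipped by assigning to it after the fact; the only path to an unjudged application in this sketch runs through `disable_judges`.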

Foundation models will continue to improve. Context windows will expand. Benchmarks will climb. But benchmarks are vanity metrics for foundation models: easily gamed and rarely representative of real-world performance on your data, in your domain. What matters is whether the system you deploy can be held accountable for what it produces. That is the bar for enterprise-grade AI, and it is what AI Judges deliver.

If you are evaluating agentic AI platforms for your marketing organization, we would encourage you to start with one question: can it explain its own answers? The answer will tell you a great deal about whether the platform is ready for the enterprise.